Skinner’s Box Experiment (Behaviorism Study)

We receive rewards and punishments for many behaviors. More importantly, once we experience that reward or punishment, we are likely to perform (or not perform) that behavior again in anticipation of the result.

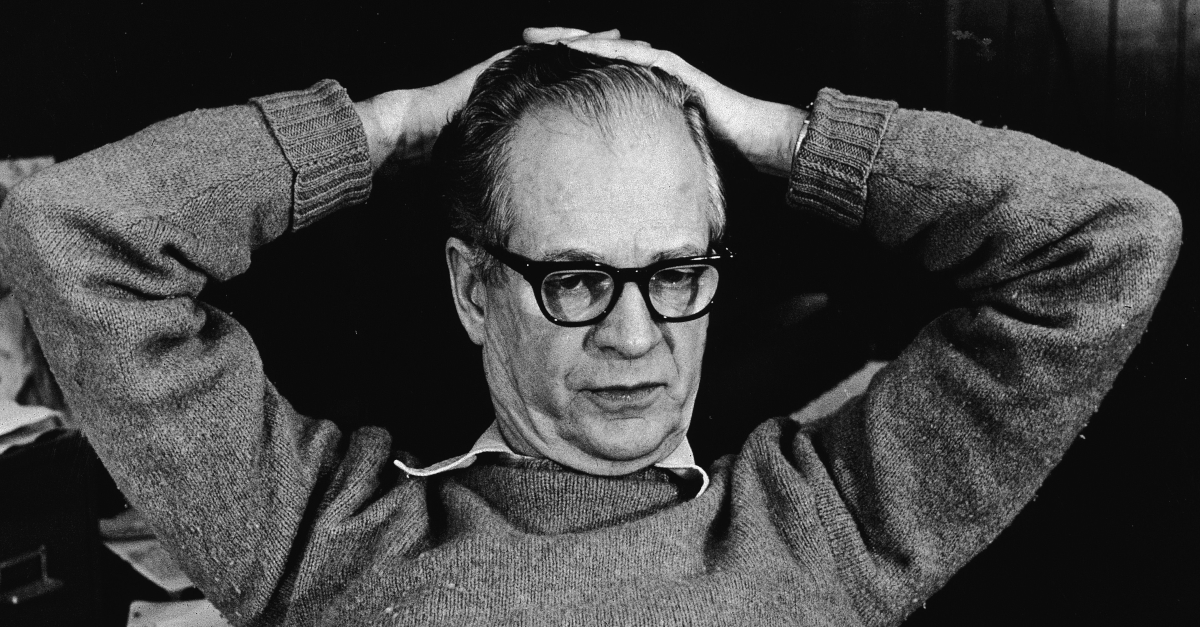

Psychologists in the late 1800s and early 1900s believed that rewards and punishments were crucial to shaping and encouraging voluntary behavior. But they needed a way to test it. And they needed a name for how rewards and punishments shaped voluntary behaviors. Along came Burrhus Frederic Skinner, the creator of Skinner's Box, and the rest is history.

What Is Skinner's Box?

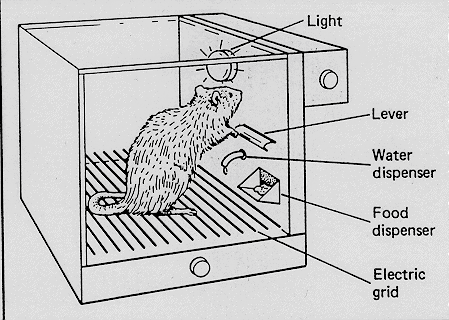

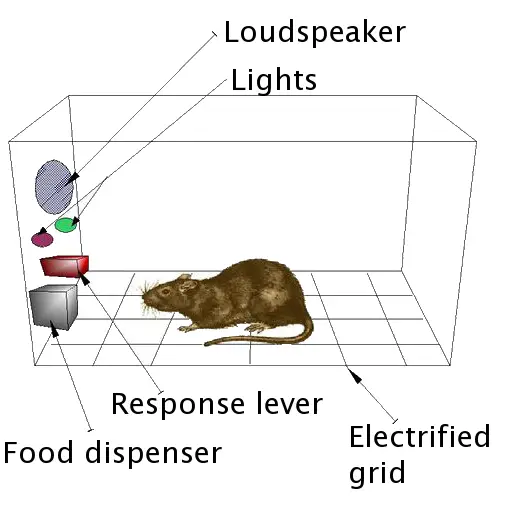

The "Skinner box" is a setup used in animal experiments. An animal is isolated in a box equipped with levers or other devices, and it learns that pressing a lever or displaying specific behaviors can lead to rewards or punishments.

This setup was crucial as behavioral psychologist B.F. Skinner developed his theories on operant conditioning. It also aided in understanding the concept of reinforcement schedules.

Here, "schedules" refer to the timing and frequency of rewards or punishments, which play a key role in shaping behavior. Skinner's research showed how different schedules impact how animals learn and respond to stimuli.

Who is B.F. Skinner?

Burrhus Frederic Skinner, also known as B.F. Skinner, is considered the “father of Operant Conditioning.” His experiments, conducted in what is known as “Skinner’s box,” are some of the most well-known experiments in psychology. They helped shape the ideas of operant conditioning in behaviorism.

Law of Effect (Thorndike vs. Skinner)

At the time, classical conditioning was the top theory in behaviorism. However, Skinner knew that research showed that voluntary behaviors could be part of the conditioning process. In the late 1800s, a psychologist named Edward Thorndike wrote about “The Law of Effect.” He said, “Responses that produce a satisfying effect in a particular situation become more likely to occur again in that situation, and responses that produce a discomforting effect become less likely to occur again in that situation.”

Thorndike tested out The Law of Effect with a box of his own. The box contained a maze and a lever. He placed a cat inside the box and a fish outside the box. He then recorded how the cats got out of the box and ate the fish.

Thorndike noticed that the cats would explore the maze and eventually find the lever. The lever would let them out of the box, leading them to the fish faster. Once they discovered this, the cats were more likely to use the lever when they wanted to get the fish.

Skinner took this idea and ran with it. We call the box where animal experiments are performed "Skinner's box."

Why Do We Call This Box the "Skinner Box?"

Edward Thorndike used a box to train animals to perform behaviors for rewards. Later, psychologists like Martin Seligman used this apparatus to observe "learned helplessness." So why is this setup called a "Skinner Box?" Skinner not only used Skinner box experiments to show the existence of operant conditioning, but he also showed schedules in which operant conditioning was more or less effective, depending on your goals. And that is why he is called The Father of Operant Conditioning.

How Skinner's Box Worked

Inspired by Thorndike, Skinner created a box to test his theory of Operant Conditioning. (This box is also known as an “operant conditioning chamber.”)

The box was typically very simple. Skinner would place a rat in the box with neutral stimuli (ones that produced neither reinforcement nor punishment) and a lever that would dispense food. As the rat explored the box, it would stumble upon the lever, press it, and get food. Skinner observed that the rat was likely to repeat this behavior in anticipation of food.

Some boxes also administered punishments. Martin Seligman's learned helplessness experiments are a great example of using punishments to observe or shape an animal's behavior.

Skinner usually worked with animals like rats or pigeons, and he took his research beyond what Thorndike did: he looked at how reinforcements and schedules of reinforcement would influence behavior.

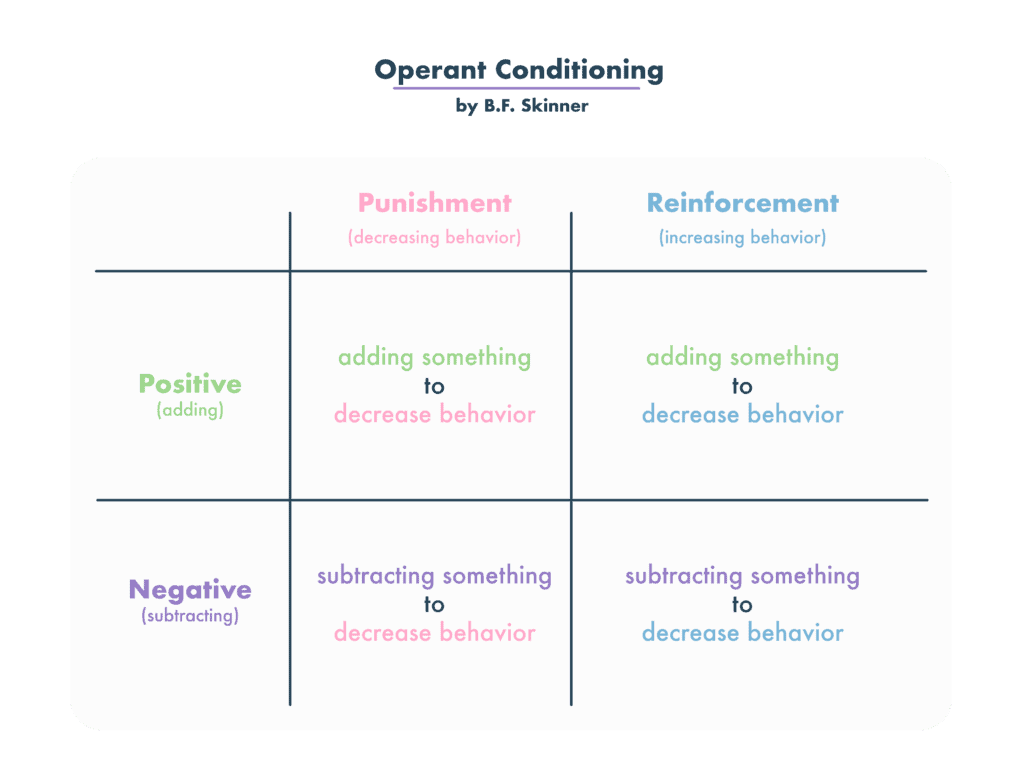

About Reinforcements

Reinforcements are the rewards that satisfy your needs. The fish that cats received outside of Thorndike’s box was positive reinforcement. In Skinner box experiments, pigeons or rats also received food. But positive reinforcements can be anything added after a behavior is performed: money, praise, candy, you name it. Operant conditioning certainly becomes more complicated when it comes to human reinforcements.

Positive vs. Negative Reinforcements

Skinner also looked at negative reinforcements. Whereas positive reinforcements add something rewarding for the subject, negative reinforcements reward the subject by taking something unpleasant away. In some experiments in the Skinner box, he would send an electric current through the box that would shock the rats. If the rats pushed the lever, the shocks would stop. The removal of that terrible pain was a negative reinforcement. The rats still sought this reinforcement, even though they gained nothing new when the shocks ended. Skinner saw that the rats quickly learned to turn off the shocks by pushing the lever.

About Punishments

Skinner also used the box to study positive and negative punishments, in which something unpleasant was added or something desirable was taken away following "bad behavior." For now, let's focus on the schedules of reinforcement.

Schedules of Reinforcement

We know that not every behavior has the same reinforcement every single time. Think about the tips you receive as a rideshare driver or a barista at a coffee shop. You may have a string of customers who tip you generously after you converse with them. At this point, you’re likely to converse with your next customer. But what happens if they don’t tip you after you have a conversation with them? What happens if you stay silent for one ride and get a big tip?

Psychologists like Skinner wanted to know how quickly someone makes a behavior a habit after receiving reinforcement. In other words, how many rides will it take before you converse with passengers every time? They also wanted to know how quickly you would stop conversing with passengers if the tips stopped. If a rat presses the lever and doesn't get food, will it stop pressing the lever altogether?

Skinner attempted to answer these questions by looking at different schedules of reinforcement. He would offer positive reinforcement on different schedules, such as every time the behavior was performed (continuous reinforcement) or at random (variable-ratio reinforcement). Based on his experiments, he would measure the following:

- Response rate (how quickly the behavior was performed)

- Extinction rate (how quickly the behavior would stop)

He found that there are multiple schedules of reinforcement, and they all yield different results. These schedules explain why your dog may not be responding to the treats you sometimes give him or why gambling can be so addictive. Not every schedule is practical in every situation, and that's okay, too.

Continuous Reinforcement

If you reinforce a behavior every time it is performed, the response rate is medium, and the extinction rate is fast. The behavior will be performed only when reinforcement is needed, and as soon as you stop reinforcing it, the behavior stops.

Fixed-Ratio Reinforcement

Let’s say you reinforce the behavior after a fixed number of responses, such as every fifth time. The response rate is fast, and the extinction rate is medium. The behavior will be performed quickly to reach the reinforcement.

Fixed-Interval Reinforcement

In the above cases, the reinforcement was given immediately after the behavior was performed. But what if the reinforcement was given at a fixed interval, provided that the behavior was performed at some point? Skinner found that the response rate is medium, and the extinction rate is medium.

Variable-Ratio Reinforcement

Under a variable-ratio schedule, the behavior is reinforced after an unpredictable number of responses. Gambling is the classic example: you experience occasional wins but often face losses, and this uncertainty keeps you hooked because you never know when the next big win, or dopamine hit, will come. When gambling, your response is quick, but it takes a long time to stop wanting to gamble. This resistance to extinction is a key reason why gambling is so addictive.

Variable-Interval Reinforcement

Last, the reinforcement is given out at random intervals, provided that the behavior is performed. Health inspectors or secret shoppers are commonly used examples of variable-interval reinforcement. The reinforcement could be administered five minutes after the behavior is performed or seven hours after the behavior is performed. Skinner found that the response rate for this schedule is fast, and the extinction rate is slow.

Skinner's Box and Pigeon Pilots in World War II

Yes, you read that right. Skinner's work with pigeons and other animals in Skinner's box had real-life effects. After some time training pigeons in his boxes, B.F. Skinner got an idea. Pigeons were easy to train. They can see very well as they fly through the sky. They're also quite calm creatures and don't panic in intense situations. Their skills could be applied to the war that was raging on around him.

B.F. Skinner decided to create a missile that pigeons would operate. That's right. The U.S. military was having trouble accurately targeting missiles, and B.F. Skinner believed pigeons could help. He believed he could train the pigeons to recognize a target and peck when they saw it. As the pigeons pecked, Skinner's specially designed cockpit would navigate appropriately. Pigeons could be pilots in World War II missions, fighting Nazi Germany.

When Skinner proposed this idea to the military, he was met with skepticism. Yet, he received $25,000 to start his work on "Project Pigeon." The device worked! Operant conditioning trained pigeons to navigate missiles appropriately and hit their targets. Unfortunately, there was one problem. The mission killed the pigeons once the missiles were dropped. It would require a lot of pigeons! The military eventually passed on the project, but cockpit prototypes are on display at the American History Museum. Pretty cool, huh?

Examples of Operant Conditioning in Everyday Life

Not every example of operant conditioning has to end in dropping missiles. Nor does it have to happen in a box in a laboratory! You might find that you have used operant conditioning on yourself, a pet, or a child whose behavior changes with rewards and punishments. These operant conditioning examples will look into what this process can do for behavior and personality.

Hot Stove: If you put your hand on a hot stove, you will get burned. More importantly, you are very unlikely to put your hand on that hot stove again. Even though no one has made that stove hot as a punishment, the process still works.

Tips: If you converse with a passenger while driving for Uber, you might get an extra tip at the end of your ride. That's certainly a great reward! You will likely keep conversing with passengers as you drive for Uber. The same type of behavior applies to any service worker who gets tips!

Training a Dog: If your dog sits when you say “sit,” you might give him a treat. More importantly, he is then likely to sit the next time you say “sit.” (This is a form of variable-ratio reinforcement: you probably treat your dog only 50-90% of the times he sits. If you gave him a treat every time he sat, he probably wouldn't have room for breakfast or dinner!)

Operant Conditioning Is Everywhere!

We see operant conditioning training us everywhere, intentionally or unintentionally! Game makers and app developers design their products based on the "rewards" our brains feel when seeing notifications or checking into the app. Schoolteachers use rewards to control their unruly classes. Dog training doesn't always look different from training your child to do chores. We know why this happens, thanks to experiments like the ones performed in Skinner's box.


© PracticalPsychology. All rights reserved


Operant Conditioning: What It Is, How It Works, and Examples

Saul McLeod, PhD

Editor-in-Chief for Simply Psychology

BSc (Hons) Psychology, MRes, PhD, University of Manchester

Saul McLeod, PhD., is a qualified psychology teacher with over 18 years of experience in further and higher education. He has been published in peer-reviewed journals, including the Journal of Clinical Psychology.


Olivia Guy-Evans, MSc

Associate Editor for Simply Psychology

BSc (Hons) Psychology, MSc Psychology of Education

Olivia Guy-Evans is a writer and associate editor for Simply Psychology. She has previously worked in healthcare and educational sectors.


Operant conditioning, or instrumental conditioning, is a theory of learning where behavior is influenced by its consequences. Behavior that is reinforced (rewarded) will likely be repeated, and behavior that is punished will occur less frequently.

By the 1920s, John B. Watson had left academic psychology, and other behaviorists were becoming influential, proposing new forms of learning other than classical conditioning. Perhaps the most important of these was Burrhus Frederic Skinner, although, for obvious reasons, he is more commonly known as B.F. Skinner.

Skinner’s views were slightly less extreme than Watson’s (1913). Skinner believed that we do have such a thing as a mind, but that it is simply more productive to study observable behavior rather than internal mental events.

Skinner’s work was rooted in the view that classical conditioning was far too simplistic to fully explain complex human behavior. He believed that the best way to understand behavior is to examine its causes and consequences. He called this approach operant conditioning.

How It Works

Skinner is regarded as the father of Operant Conditioning, but his work was based on Thorndike’s (1898) Law of Effect. According to this principle, behavior that is followed by pleasant consequences is likely to be repeated, and behavior followed by unpleasant consequences is less likely to be repeated.

Skinner introduced a new term into the Law of Effect – Reinforcement. Behavior that is reinforced tends to be repeated (i.e., strengthened); behavior that is not reinforced tends to die out or be extinguished (i.e., weakened).

Skinner (1948) studied operant conditioning by conducting experiments using animals, which he placed in a “Skinner Box,” which was similar to Thorndike’s puzzle box.

A Skinner box, also known as an operant conditioning chamber, is a device used to objectively record an animal’s behavior in a compressed time frame. An animal can be rewarded or punished for engaging in certain behaviors, such as lever pressing (for rats) or key pecking (for pigeons).

Skinner identified three types of responses, or operants, that can follow behavior.

- Neutral operants: Responses from the environment that neither increase nor decrease the probability of a behavior being repeated.

- Reinforcers: Responses from the environment that increase the probability of a behavior being repeated. Reinforcers can be either positive or negative.

- Punishers: Responses from the environment that decrease the likelihood of a behavior being repeated. Punishment weakens behavior.

We can all think of examples of how reinforcers and punishers have affected our behavior. As a child, you probably tried out a number of behaviors and learned from their consequences.

For example, when you were younger, if you tried smoking at school, and the chief consequence was that you got in with the crowd you always wanted to hang out with, you would have been positively reinforced (i.e., rewarded) and would be likely to repeat the behavior.

If, however, the main consequence was that you were caught, caned, suspended from school, and your parents became involved, you would most certainly have been punished, and you would consequently be much less likely to smoke now.

Positive Reinforcement

B. F. Skinner’s theory of operant conditioning describes positive reinforcement. In positive reinforcement, a response or behavior is strengthened by rewards, leading to the repetition of the desired behavior. The reward is a reinforcing stimulus.

Primary reinforcers are stimuli that are naturally reinforcing because they are not learned and directly satisfy a need, such as food or water.

Secondary reinforcers are stimuli that become reinforcing through their association with a primary reinforcer, such as money or school grades. They do not directly satisfy an innate need but may be the means of obtaining something that does, so a secondary reinforcer can be just as powerful a motivator as a primary reinforcer.

Skinner showed how positive reinforcement worked by placing a hungry rat in his Skinner box. The box contained a lever on the side, and as the rat moved about the box, it would accidentally knock the lever. As soon as it did so, a food pellet would drop into a container next to the lever.

After being put in the box a few times, the rats quickly learned to go straight to the lever. The consequence of receiving food if they pressed the lever ensured that they would repeat the action again and again.

Positive reinforcement strengthens a behavior by providing a consequence an individual finds rewarding. For example, if your teacher gives you £5 each time you complete your homework (i.e., a reward), you will be more likely to repeat this behavior in the future, thus strengthening the behavior of completing your homework.

The Premack principle is a form of positive reinforcement in operant conditioning. It suggests using a preferred activity (high-probability behavior) as a reward for completing a less preferred one (low-probability behavior).

This method incentivizes the less desirable behavior by associating it with a desirable outcome, thus strengthening the less favored behavior.

Negative Reinforcement

Negative reinforcement is the termination of an unpleasant state following a response.

This is known as negative reinforcement because it is the removal of an aversive stimulus which is ‘rewarding’ to the animal or person. Negative reinforcement strengthens behavior because it stops or removes an unpleasant experience.

For example, if you do not complete your homework, you give your teacher £5. You will complete your homework to avoid paying £5, thus strengthening the behavior of completing your homework.

Skinner showed how negative reinforcement worked by placing a rat in his Skinner box and then subjecting it to an unpleasant electric current which caused it some discomfort. As the rat moved about the box, it would accidentally knock the lever.

As soon as it did so, the electric current would be switched off. The rats quickly learned to go straight to the lever after being put in the box a few times. The consequence of escaping the electric current ensured that they would repeat the action again and again.

In fact, Skinner even taught the rats to avoid the electric current by turning on a light just before the electric current came on. The rats soon learned to press the lever when the light came on because they knew that this would stop the electric current from being switched on.

These two learned responses are known as Escape Learning and Avoidance Learning.

Punishment

Punishment is the opposite of reinforcement since it is designed to weaken or eliminate a response rather than increase it. It is an aversive event that decreases the behavior that it follows.

Like reinforcement, punishment can work either by directly applying an unpleasant stimulus like a shock after a response or by removing a potentially rewarding stimulus, for instance, deducting someone’s pocket money to punish undesirable behavior.

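Note: It is not always easy to distinguish between punishment and negative reinforcement. Negative reinforcement strengthens a behavior by removing something unpleasant, whereas punishment weakens a behavior.

Punishment itself comes in two distinct forms. Both are used to decrease the likelihood of a specific behavior occurring again, but they involve different types of consequences: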

Positive Punishment:

- Positive punishment involves adding an aversive stimulus or something unpleasant immediately following a behavior to decrease the likelihood of that behavior happening in the future.

- It aims to weaken the target behavior by associating it with an undesirable consequence.

- Example: A child receives a scolding (an aversive stimulus) from their parent immediately after hitting their sibling. This is intended to decrease the likelihood of the child hitting their sibling again.

Negative Punishment:

- Negative punishment involves removing a desirable stimulus or something rewarding immediately following a behavior to decrease the likelihood of that behavior happening in the future.

- It aims to weaken the target behavior by taking away something the individual values or enjoys.

- Example: A teenager loses their video game privileges (a desirable stimulus) for not completing their chores. This is intended to decrease the likelihood of the teenager neglecting their chores in the future.

There are many problems with using punishment, such as:

- Punished behavior is not forgotten but suppressed: it returns when punishment is no longer present.

- It can increase aggression by showing that aggression is a way to cope with problems.

- It creates fear that can generalize to undesirable behaviors, e.g., fear of school.

- It does not necessarily guide you toward desired behavior: reinforcement tells you what to do, whereas punishment only tells you what not to do.

Examples of Operant Conditioning

Positive Reinforcement: Suppose you are a coach and want your team to improve their passing accuracy in soccer. When the players execute accurate passes during training, you praise their technique. This positive feedback encourages them to repeat the correct passing behavior.

Negative Reinforcement: If you notice your team working together effectively and exhibiting excellent team spirit during a tough training session, you might end the training session earlier than planned, which the team perceives as a relief. They understand that teamwork leads to positive outcomes, reinforcing team behavior.

Negative Punishment: If an office worker continually arrives late, their manager might revoke the privilege of flexible working hours. This removal of a positive stimulus encourages the employee to be punctual.

Positive Reinforcement: Training a cat to use a litter box can be achieved by giving it a treat each time it uses it correctly. The cat will associate the behavior with the reward and will likely repeat it.

Negative Punishment: If teenagers stay out past their curfew, their parents might take away their gaming console for a week. This makes the teenager more likely to respect their curfew in the future to avoid losing something they value.

Ineffective Punishment: Your child refuses to finish their vegetables at dinner. You punish them by not allowing dessert, but the child still refuses to eat vegetables next time. The punishment seems ineffective.

Premack Principle Application: You could motivate your child to eat vegetables by offering an activity they love after they finish their meal. For instance, for every vegetable eaten, they get an extra five minutes of video game time. They value video game time, which might encourage them to eat vegetables.

Other Premack Principle Examples:

- A student who dislikes history but loves art might earn extra time in the art studio for each history chapter reviewed.

- For every 10 minutes a person spends on household chores, they can spend 5 minutes on a favorite hobby.

- For each successful day of healthy eating, an individual allows themselves a small piece of dark chocolate at the end of the day.

- A child can choose between taking out the trash or washing the dishes. Giving them the choice makes them more likely to complete the chore willingly.

Skinner’s Pigeon Experiment

B.F. Skinner conducted several experiments with pigeons to demonstrate the principles of operant conditioning.

One of the most famous of these experiments is often colloquially referred to as “Superstition in the Pigeon.”

This experiment was conducted to explore the effects of non-contingent reinforcement on pigeons, leading to some fascinating observations that can be likened to human superstitions.

Non-contingent reinforcement (NCR) refers to a method in which rewards (or reinforcements) are delivered independently of the individual’s behavior. In other words, the reinforcement is given at set times or intervals, regardless of what the individual is doing.

The Experiment:

- Pigeons were brought to a state of hunger, reduced to 75% of their well-fed weight.

- They were placed in a cage with a food hopper that could be presented for five seconds at a time.

- Instead of the food being given as a result of any specific action by the pigeon, it was presented at regular intervals, regardless of the pigeon’s behavior.

Observation:

- Over time, Skinner observed that the pigeons began to associate whatever random action they were doing when food was delivered with the delivery of the food itself.

- This led the pigeons to repeat these actions, believing (in anthropomorphic terms) that their behavior was causing the food to appear.

- In most cases, pigeons developed different “superstitious” behaviors or rituals. For instance, one pigeon would turn counter-clockwise between food presentations, while another would thrust its head into a cage corner.

- These behaviors did not appear until the food hopper was introduced and presented periodically.

- These behaviors were not initially related to the food delivery but became linked in the pigeon’s mind due to the coincidental timing of the food dispensing.

- The behaviors seemed to be associated with the environment, suggesting the pigeons were responding to certain aspects of their surroundings.

- The rate of reinforcement (how often the food was presented) played a significant role. Shorter intervals between food presentations led to more rapid and defined conditioning.

- Once a behavior was established, the interval between reinforcements could be increased without diminishing the behavior.
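The observations above can be illustrated with a toy simulation. This is a hypothetical sketch, not a reconstruction of Skinner's procedure: the list of actions, the 15-second interval, and the simple learning rule (add a fixed amount to the weight of whatever action is under way when food arrives) are all assumptions made for illustration. What it does capture is the defining feature of non-contingent reinforcement: the delivery times depend only on the clock, never on what the bird is doing.

```python
import random

# Hypothetical toy model of non-contingent ("superstitious") reinforcement.
# The actions, interval, and learning rule are illustrative assumptions.
ACTIONS = ["turn counter-clockwise", "head in corner", "peck floor", "hop"]


def simulate(seconds: int, food_every: int, boost: float = 1.0, seed: int = 0):
    rng = random.Random(seed)
    # Every action starts with weight 1.0 (an assumption: no initial preference).
    weights = {action: 1.0 for action in ACTIONS}
    deliveries = []
    for t in range(1, seconds + 1):
        # Each second, pick the current action in proportion to its weight.
        action = rng.choices(ACTIONS, weights=[weights[a] for a in ACTIONS])[0]
        # Food depends only on the clock, not on `action` (non-contingent).
        if t % food_every == 0:
            deliveries.append(t)
            # The coincidental pairing strengthens whatever was happening.
            weights[action] += boost
    return weights, deliveries


weights, deliveries = simulate(seconds=600, food_every=15)
print(deliveries[:3])  # → [15, 30, 45]
print(max(weights, key=weights.get), weights)
```

Because each coincidental pairing makes that action more likely to be under way at the next delivery, early accidents tend to snowball, loosely mirroring the idiosyncratic rituals described above. Which action ends up dominating depends on the random seed, much as different pigeons developed different rituals.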

Superstitious Behavior:

The pigeons began to act as if their behaviors had a direct effect on the presentation of food, even though there was no such connection. This is likened to human superstitions, where rituals are believed to change outcomes, even if they have no real effect.

For example, a card player might have rituals to change their luck, or a bowler might make gestures believing they can influence a ball already in motion.

Conclusion:

This experiment demonstrates that behaviors can be conditioned even without a direct cause-and-effect relationship. Just like humans, pigeons can develop “superstitious” behaviors based on coincidental occurrences.

This study not only illuminates the intricacies of operant conditioning but also draws parallels between animal and human behaviors in the face of random reinforcements.

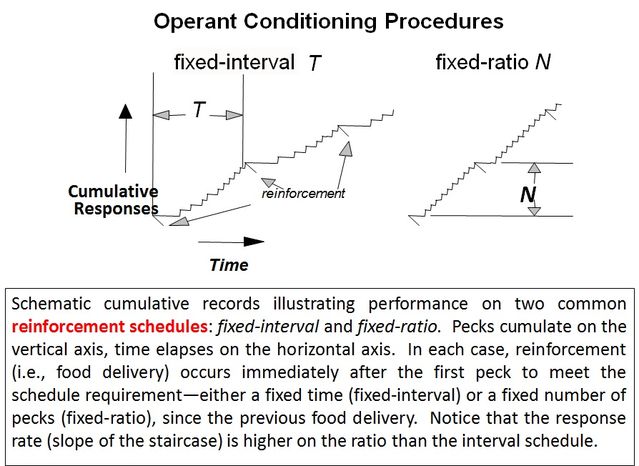

Schedules of Reinforcement

Imagine a rat in a “Skinner box.” In operant conditioning, if no food pellet is delivered immediately after the lever is pressed, then after several attempts, the rat stops pressing the lever (how long would someone continue to go to work if their employer stopped paying them?). The behavior has been extinguished.

Behaviorists discovered that different patterns (or schedules) of reinforcement had different effects on the speed of learning and extinction. Ferster and Skinner (1957) devised different ways of delivering reinforcement and found that this had effects on:

1. The Response Rate – The rate at which the rat pressed the lever (i.e., how hard the rat worked).

2. The Extinction Rate – The rate at which lever pressing dies out (i.e., how soon the rat gave up).

Skinner found that variable-ratio reinforcement produces the slowest rate of extinction (i.e., people will continue repeating the behavior for the longest time without reinforcement). The type of reinforcement with the quickest rate of extinction is continuous reinforcement.

(A) Continuous Reinforcement

An animal or human is positively reinforced every time a specific behavior occurs, e.g., every time a lever is pressed, a pellet is delivered, and then food delivery is shut off.

- Response rate is SLOW

- Extinction rate is FAST

(B) Fixed Ratio Reinforcement

Behavior is reinforced only after the behavior occurs a specified number of times, e.g., one reinforcement is given after every so many correct responses, such as after every 5th response. For example, a child receives a star for every five words spelled correctly.

- Response rate is FAST

- Extinction rate is MEDIUM

(C) Fixed Interval Reinforcement

One reinforcement is given after a fixed time interval, provided at least one correct response has been made. An example is being paid by the hour. Another example: every 15 minutes (half hour, hour, etc.), a pellet is delivered (provided at least one lever press has been made), and then food delivery is shut off.

- Response rate is MEDIUM

(D) Variable Ratio Reinforcement

Behavior is reinforced after an unpredictable number of responses. Examples include gambling and fishing.

- Extinction rate is SLOW (very hard to extinguish because of unpredictability)

(E) Variable Interval Reinforcement

Provided at least one correct response has been made, reinforcement is given after an unpredictable amount of time has passed, e.g., on average every 5 minutes. An example is a self-employed person being paid at unpredictable times.

- Extinction rate is SLOW
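The five schedules (A) to (E) can be expressed as small decision rules. The sketch below is a hypothetical illustration, not Ferster and Skinner's apparatus: each schedule is a function called once per lever press with the time of the press, returning whether a pellet is delivered. The fixed interval is implemented in a common way (the first press after the interval elapses is reinforced, which guarantees at least one response), and the uniform distributions used for the variable schedules are assumptions chosen only so that the averages match the stated values.

```python
import random
from typing import Callable

# A schedule is called once per response (e.g. a lever press) with the time
# of that response in seconds; it returns True if reinforcement is delivered.
Schedule = Callable[[float], bool]


def continuous() -> Schedule:
    # (A) Every response is reinforced.
    return lambda t: True


def fixed_ratio(n: int) -> Schedule:
    # (B) Every n-th response is reinforced, e.g. n = 5.
    count = 0

    def respond(t: float) -> bool:
        nonlocal count
        count += 1
        if count == n:
            count = 0
            return True
        return False

    return respond


def fixed_interval(seconds: float) -> Schedule:
    # (C) The first response after `seconds` have passed since the last
    # reinforcement (or the session start at t = 0) is reinforced.
    last = 0.0

    def respond(t: float) -> bool:
        nonlocal last
        if t - last >= seconds:
            last = t
            return True
        return False

    return respond


def variable_ratio(mean_n: int, rng: random.Random) -> Schedule:
    # (D) The number of responses required changes unpredictably after each
    # reinforcement; drawing it uniformly from 1..2*mean_n-1 (an assumption)
    # keeps the average at mean_n.
    count, required = 0, rng.randint(1, 2 * mean_n - 1)

    def respond(t: float) -> bool:
        nonlocal count, required
        count += 1
        if count >= required:
            count, required = 0, rng.randint(1, 2 * mean_n - 1)
            return True
        return False

    return respond


def variable_interval(mean_seconds: float, rng: random.Random) -> Schedule:
    # (E) Like (C), but each wait is unpredictable; drawing it uniformly from
    # 0..2*mean_seconds (an assumption) keeps the average at mean_seconds.
    last, wait = 0.0, rng.uniform(0, 2 * mean_seconds)

    def respond(t: float) -> bool:
        nonlocal last, wait
        if t - last >= wait:
            last, wait = t, rng.uniform(0, 2 * mean_seconds)
            return True
        return False

    return respond


# Usage: a rat presses the lever once every 10 seconds for 10 minutes.
presses = [10.0 * i for i in range(1, 61)]
rng = random.Random(42)
schedules = {
    "continuous": continuous(),
    "fixed ratio (5)": fixed_ratio(5),
    "fixed interval (60 s)": fixed_interval(60),
    "variable ratio (mean 5)": variable_ratio(5, rng),
    "variable interval (mean 60 s)": variable_interval(60, rng),
}
for name, schedule in schedules.items():
    pellets = sum(schedule(t) for t in presses)
    print(f"{name}: {pellets} pellets for {len(presses)} presses")
```

The sketch only decides when reinforcement is delivered. It says nothing about how the animal's response rate or extinction rate would change under each schedule, which is what Skinner actually measured.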

Applications In Psychology

1. Behavior Modification Therapy

Behavior modification is a set of therapeutic techniques based on operant conditioning (Skinner, 1938, 1953). The main principle involves changing environmental events that are related to a person’s behavior, for example, reinforcing desired behaviors and ignoring or punishing undesired ones.

This is not as simple as it sounds; always reinforcing desired behavior, for example, is basically bribery.

There are different types of positive reinforcements. Primary reinforcement is when a reward strengthens a behavior by itself. Secondary reinforcement is when something strengthens a behavior because it leads to a primary reinforcer.

Examples of behavior modification therapy include token economy and behavior shaping.

Token Economy

Token economy is a system in which targeted behaviors are reinforced with tokens (secondary reinforcers) and later exchanged for rewards (primary reinforcers).

Tokens can be in the form of fake money, buttons, poker chips, stickers, etc., while the rewards can range anywhere from snacks to privileges or activities. For example, primary school teachers use a token economy by giving young children stickers to reward good behavior.

Token economy has been found to be very effective in managing psychiatric patients. However, the patients can become over-reliant on the tokens, making it difficult for them to adjust to society once they leave prison, hospital, etc.

Staff implementing a token economy program have a lot of power. It is important that staff do not favor or ignore certain individuals if the program is to work. Therefore, staff need to be trained to give tokens fairly and consistently even when there are shift changes such as in prisons or in a psychiatric hospital.
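The bookkeeping behind a token economy can be sketched in a few lines of Python. The behaviors, reward names, and prices below are invented for illustration; the point is that one shared earn-rate table makes token delivery identical for everyone, which is what giving tokens fairly and consistently across staff and shifts amounts to.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class TokenEconomy:
    """Hypothetical token-economy ledger.

    Tokens (secondary reinforcers) are earned for target behaviors and later
    exchanged for backup rewards (primary reinforcers). A single earn-rate
    table is shared by everyone, so the same behavior always earns the same
    number of tokens, whoever is on shift.
    """

    earn_rates: Dict[str, int]  # target behavior -> tokens earned
    prices: Dict[str, int]      # reward -> tokens required
    balances: Dict[str, int] = field(default_factory=dict)

    def record(self, person: str, behavior: str) -> int:
        """Award tokens for a behavior (0 if it is not a target); return the new balance."""
        earned = self.earn_rates.get(behavior, 0)
        self.balances[person] = self.balances.get(person, 0) + earned
        return self.balances[person]

    def exchange(self, person: str, reward: str) -> bool:
        """Trade tokens for a reward if the balance covers its price."""
        price = self.prices[reward]
        if self.balances.get(person, 0) < price:
            return False
        self.balances[person] -= price
        return True


# Usage with made-up behaviors and prices for a primary-school class.
economy = TokenEconomy(
    earn_rates={"homework_done": 2, "helped_classmate": 1},
    prices={"choose_story": 3, "extra_recess": 5},
)
economy.record("ana", "homework_done")
economy.record("ana", "helped_classmate")
print(economy.exchange("ana", "choose_story"))  # True: 3 tokens spent
print(economy.exchange("ana", "choose_story"))  # False: balance is now 0
```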

Behavior Shaping

A further important contribution made by Skinner (1951) is the notion of behavior shaping through successive approximation.

Skinner argues that the principles of operant conditioning can be used to produce extremely complex behavior if rewards and punishments are delivered in such a way as to move an organism closer and closer to the desired behavior each time.

In shaping, the form of an existing response is gradually changed across successive trials towards a desired target behavior by rewarding exact segments of behavior.

To do this, the conditions (or contingencies) required to receive the reward should shift each time the organism moves a step closer to the desired behavior.
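One way to express "the contingency shifts each time the organism moves a step closer" is as a criterion that tightens after every reinforced response. The sketch below is a hypothetical illustration that reduces behavior to a single number (distance from the target); in practice shaping is judged by a trainer, not by a formula.

```python
from typing import List


def shape(responses: List[float], target: float, start_criterion: float) -> List[bool]:
    """Hypothetical successive-approximation rule.

    Each response is scored by its distance from the target behavior. It is
    reinforced only if it is at least as close as the current criterion, and
    the criterion then tightens to that distance, so each further reward
    requires a response at least as close to the target.
    """
    criterion = start_criterion
    reinforced = []
    for response in responses:
        distance = abs(target - response)
        if distance <= criterion:
            reinforced.append(True)
            criterion = distance  # the contingency shifts one step closer
        else:
            reinforced.append(False)
    return reinforced


# Usage: made-up measurements (e.g., how far a rat moves toward a lever).
print(shape([2, 5, 4, 7, 9, 10], target=10, start_criterion=6))
# [False, True, False, True, True, True]
```

The response at 4 goes unrewarded even though it is closer than the first try, because the criterion had already moved past it.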

According to Skinner, most animal and human behavior (including language) can be explained as a product of this type of successive approximation.

2. Educational Applications

In the conventional learning situation, operant conditioning applies largely to issues of class and student management, rather than to learning content. It is very relevant to shaping skill performance.

A simple way to shape behavior is to provide feedback on learner performance, e.g., compliments, approval, encouragement, and affirmation.

A variable-ratio schedule produces the highest response rate for students learning a new task, whereby initial reinforcement (e.g., praise) occurs at frequent intervals and, as performance improves, reinforcement occurs less frequently, until eventually only exceptional outcomes are reinforced.

For example, if a teacher wanted to encourage students to answer questions in class, they should praise them for every attempt (regardless of whether their answer is correct). Gradually, the teacher praises students only when their answer is correct, and over time only exceptional answers are praised.
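The praise sequence in this example amounts to a rising criterion. Note that the sketch models that rising criterion, not a variable-ratio schedule as such; the three quality levels and the phase numbering are assumptions made for illustration.

```python
from enum import IntEnum


class Answer(IntEnum):
    """Hypothetical quality levels for a student's answer."""
    ATTEMPT = 1      # any attempt, right or wrong
    CORRECT = 2
    EXCEPTIONAL = 3


# Minimum answer quality that earns praise in each phase (phase 2 onward: exceptional only).
CRITERIA = [Answer.ATTEMPT, Answer.CORRECT, Answer.EXCEPTIONAL]


def should_praise(answer: Answer, phase: int) -> bool:
    """Return True if this answer earns praise in the given phase."""
    required = CRITERIA[min(phase, len(CRITERIA) - 1)]
    return answer >= required


# Usage: the same correct answer is praised early on but not in the last phase.
print([should_praise(Answer.CORRECT, phase) for phase in range(3)])
# [True, True, False]
```

When to move from one phase to the next is left to the teacher; the sketch only encodes what counts as praiseworthy within a phase.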

Unwanted behaviors, such as tardiness and dominating class discussion, can be extinguished through being ignored by the teacher (rather than being reinforced by having attention drawn to them). This is not an easy task, as the teacher may appear insincere if he/she thinks too much about the way to behave.

Knowledge of success is also important, as it motivates future learning. However, it is important to vary the type of reinforcement given so that the behavior is maintained.

Operant Conditioning vs. Classical Conditioning

Learning Type

While both types of conditioning involve learning, classical conditioning is passive (automatic response to stimuli), while operant conditioning is active (behavior is influenced by consequences).

- Classical conditioning links an involuntary response with a stimulus. It happens passively on the part of the learner, without rewards or punishments. An example is a dog salivating at the sound of a bell associated with food.

- Operant conditioning connects voluntary behavior with a consequence. It requires the learner to actively participate and perform some type of action to be rewarded or punished, so the learner’s behavior is shaped by those rewards or punishments. An example is a dog sitting on command to get a treat.

Learning Process

Classical conditioning involves learning through associating stimuli resulting in involuntary responses, while operant conditioning focuses on learning through consequences, shaping voluntary behaviors.

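In classical conditioning, a neutral stimulus is repeatedly paired with an unconditioned stimulus. Over time, the learner responds to the neutral stimulus as if it were the unconditioned stimulus, even when it is presented alone. The response is involuntary and automatic.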

An example is a dog salivating (response) at the sound of a bell (neutral stimulus) after it has been repeatedly paired with food (unconditioned stimulus).

In operant conditioning, behavior followed by pleasant consequences (rewards) is more likely to be repeated, while behavior followed by unpleasant consequences (punishments) is less likely to be repeated.

For instance, if a child gets praised (pleasant consequence) for cleaning their room (behavior), they’re more likely to clean their room in the future.

Conversely, if they get scolded (unpleasant consequence) for not doing their homework, they’re more likely to complete it next time to avoid the scolding.

Timing of Stimulus & Response

The timing of the response relative to the stimulus differs between classical and operant conditioning:

Classical Conditioning (response after the stimulus): In this form of conditioning, the response occurs after the stimulus. The behavior (response) is determined by what precedes it (stimulus).

For example, in Pavlov’s classic experiment, the dogs started to salivate (response) after they heard the bell (stimulus) because they associated it with food.

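Operant Conditioning (consequence after the response): In this form of conditioning, the consequence follows the behavior, and the anticipated consequence influences the behavior. It is a more active form of learning, where behaviors are reinforced or punished, thus influencing their likelihood of repetition.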

For example, a child might behave well (behavior) in anticipation of a reward (consequence), or avoid a certain behavior to prevent a potential punishment.

Looking at Skinner’s classic studies on pigeons’ and rats’ behavior, we can identify some of the major assumptions of the behaviorist approach.

- Psychology should be seen as a science, to be studied in a scientific manner. Skinner’s study of behavior in rats was conducted under carefully controlled laboratory conditions.

- Behaviorism is primarily concerned with observable behavior, as opposed to internal events like thinking and emotion. Note that Skinner did not say that the rats learned to press a lever because they wanted food. He instead concentrated on describing the easily observed behavior that the rats acquired.

- The major influence on human behavior is learning from our environment. In the Skinner study, because food followed a particular behavior, the rats learned to repeat that behavior, i.e., operant conditioning.

- There is little difference between the learning that takes place in humans and that in other animals. Therefore, research (e.g., on operant conditioning) can be carried out on animals (rats, pigeons) as well as on humans. Skinner proposed that the way humans learn behavior is much the same as the way the rats learned to press a lever.

So, if your layperson’s idea of psychology has always been of people in laboratories wearing white coats and watching hapless rats try to negotiate mazes to get to their dinner, then you are probably thinking of behavioral psychology.

Behaviorism and its offshoots tend to be among the most scientific of the psychological perspectives. The emphasis of behavioral psychology is on how we learn to behave in certain ways.

We are all constantly learning new behaviors and how to modify our existing behavior. Behavioral psychology is the psychological approach that focuses on how this learning takes place.

Critical Evaluation

Operant conditioning can explain a wide variety of behaviors, from the learning process to addiction and language acquisition. It also has practical applications (such as token economy) that can be used in classrooms, prisons, and psychiatric hospitals.

Researchers have found innovative ways to apply operant conditioning principles to promote health and habit change in humans.

In a recent study, operant conditioning using virtual reality (VR) helped stroke patients use their weakened limb more often during rehabilitation. Patients shifted their weight in VR games by maneuvering a virtual object. When they increased weight on their weakened side, they received rewards like stars. This positive reinforcement conditioned greater paretic limb use (Kumar et al., 2019).

Another study utilized operant conditioning to assist smoking cessation. Participants earned vouchers exchangeable for goods and services for reducing smoking. This reward system reinforced decreasing cigarette use. Many participants achieved long-term abstinence (Dallery et al., 2017).

Through repeated reinforcement, operant conditioning can facilitate forming exercise and eating habits. A person trying to exercise more might earn TV time for every 10 minutes spent working out. An individual aiming to eat healthier may allow themselves a daily dark chocolate square for sticking to nutritious meals. Providing consistent rewards for desired actions can instill new habits (Michie et al., 2009).

Apps like Habitica apply operant conditioning by gamifying habit tracking. Users earn points and collect rewards in a fantasy game for completing real-life habits. This virtual reinforcement helps ingrain positive behaviors (Eckerstorfer et al., 2019).

Operant conditioning also shows promise for managing ADHD and OCD. Rewarding concentration and focus in children with ADHD, for example, can strengthen their attention skills (Rosén et al., 2018). Similarly, reinforcing OCD patients for resisting compulsions may diminish obsessive behaviors (Twohig et al., 2010).

However, operant conditioning fails to take into account the role of inherited and cognitive factors in learning, and thus is an incomplete explanation of the learning process in humans and animals.

For example, Kohler (1924) found that primates often seem to solve problems in a flash of insight rather than by trial-and-error learning. Also, social learning theory (Bandura, 1977) suggests that humans can learn automatically through observation rather than through personal experience.

The use of animal research in operant conditioning studies also raises the issue of extrapolation. Some psychologists argue we cannot generalize from studies on animals to humans as their anatomy and physiology are different from humans, and they cannot think about their experiences and invoke reason, patience, memory or self-comfort.

Frequently Asked Questions

Who discovered operant conditioning?

Operant conditioning was discovered by B.F. Skinner, an American psychologist, in the mid-20th century. Skinner is often regarded as the father of operant conditioning, and his work extensively dealt with the mechanism of reward and punishment for behaviors, with the concept being that behaviors followed by positive outcomes are reinforced, while those followed by negative outcomes are discouraged.

How does operant conditioning differ from classical conditioning?

Operant conditioning differs from classical conditioning in focusing on how voluntary behavior is shaped and maintained by consequences, such as rewards and punishments.

In operant conditioning, a behavior is strengthened or weakened based on the consequences that follow it. In contrast, classical conditioning involves the association of a neutral stimulus with a natural response, creating a new learned response.

While both types of conditioning involve learning and behavior modification, operant conditioning emphasizes the role of reinforcement and punishment in shaping voluntary behavior.

How does operant conditioning relate to social learning theory?

Operant conditioning is a core component of social learning theory, which emphasizes the importance of observational learning and modeling in acquiring and modifying behavior.

Social learning theory suggests that individuals can learn new behaviors by observing others and the consequences of their actions, which is similar to the reinforcement and punishment processes in operant conditioning.

By observing and imitating models, individuals can acquire new skills and behaviors and modify their own behavior based on the outcomes they observe in others.

Overall, both operant conditioning and social learning theory highlight the importance of environmental factors in shaping behavior and learning.

What are the downsides of operant conditioning?

The downsides of using operant conditioning on individuals include the potential for unintended negative consequences, particularly with the use of punishment. Punishment may lead to increased aggression or avoidance behaviors.

Additionally, some behaviors may be difficult to shape or modify using operant conditioning techniques, particularly when they are highly ingrained or tied to complex internal states.

Furthermore, individuals may resist changing their behaviors to meet the expectations of others, particularly if they perceive the demands or consequences of the reinforcement or punishment to be undesirable or unjust.

What is an application of B.F. Skinner’s operant conditioning theory?

An application of B.F. Skinner’s operant conditioning theory is seen in education and classroom management. Teachers use positive reinforcement (rewards) to encourage good behavior and academic achievement, and punishment to discourage disruptive behavior.

For example, a student may earn extra recess time (positive reinforcement) for completing homework on time, or lose the privilege to use class computers (negative punishment) for misbehavior.

Further Reading

- Ayllon, T., & Michael, J. (1959). The psychiatric nurse as a behavioral engineer. Journal of the Experimental Analysis of Behavior, 2(4), 323–334.

- Bandura, A. (1977). Social learning theory. Englewood Cliffs, NJ: Prentice Hall.

- Dallery, J., Meredith, S., & Glenn, I. M. (2017). A deposit contract method to deliver abstinence reinforcement for cigarette smoking. Journal of Applied Behavior Analysis, 50(2), 234–248.

- Eckerstorfer, L., Tanzer, N. K., Vogrincic-Haselbacher, C., Kedia, G., Brohmer, H., Dinslaken, I., & Corbasson, R. (2019). Key elements of mHealth interventions to successfully increase physical activity: Meta-regression. JMIR mHealth and uHealth, 7(11), e12100.

- Ferster, C. B., & Skinner, B. F. (1957). Schedules of reinforcement. New York: Appleton-Century-Crofts.

- Kohler, W. (1924). The mentality of apes. London: Routledge & Kegan Paul.

- Kumar, D., Sinha, N., Dutta, A., & Lahiri, U. (2019). Virtual reality-based balance training system augmented with operant conditioning paradigm. Biomedical Engineering Online, 18(1), 1–23.

- Michie, S., Abraham, C., Whittington, C., McAteer, J., & Gupta, S. (2009). Effective techniques in healthy eating and physical activity interventions: A meta-regression. Health Psychology, 28(6), 690–701.

- Rosén, E., Westerlund, J., Rolseth, V., Johnson, R. M., Viken Fusen, A., Årmann, E., Ommundsen, R., Lunde, L.-K., Ulleberg, P., Daae Zachrisson, H., & Jahnsen, H. (2018). Effects of QbTest-guided ADHD treatment: A randomized controlled trial. European Child & Adolescent Psychiatry, 27(4), 447–459.

- Schunk, D. (2016). Learning theories: An educational perspective. Pearson.

- Skinner, B. F. (1938). The behavior of organisms: An experimental analysis. New York: Appleton-Century.

- Skinner, B. F. (1948). ‘Superstition’ in the pigeon. Journal of Experimental Psychology, 38(2), 168–172.

- Skinner, B. F. (1951). How to teach animals. Freeman.

- Skinner, B. F. (1953). Science and human behavior. Macmillan.

- Thorndike, E. L. (1898). Animal intelligence: An experimental study of the associative processes in animals. Psychological Monographs: General and Applied, 2(4), i–109.

- Twohig, M. P., Whittal, M. L., Cox, J. M., & Gunter, R. (2010). An initial investigation into the processes of change in ACT, CT, and ERP for OCD. International Journal of Behavioral Consultation and Therapy, 6(2), 67–83.

- Watson, J. B. (1913). Psychology as the behaviorist views it. Psychological Review, 20, 158–177.

Operant Conditioning

J. E. R. Staddon and D. T. Cerutti

Issue date 2003.

Operant behavior is behavior “controlled” by its consequences. In practice, operant conditioning is the study of reversible behavior maintained by reinforcement schedules. We review empirical studies and theoretical approaches to two large classes of operant behavior: interval timing and choice. We discuss cognitive versus behavioral approaches to timing, the “gap” experiment and its implications, proportional timing and Weber's law, temporal dynamics and linear waiting, and the problem of simple chain-interval schedules. We review the long history of research on operant choice: the matching law, its extensions and problems, concurrent chain schedules, and self-control. We point out how linear waiting may be involved in timing, choice, and reinforcement schedules generally. There are prospects for a unified approach to all these areas.

Keywords: interval timing, choice, concurrent schedules, matching law, self-control

INTRODUCTION

The term operant conditioning was coined by B. F. Skinner in 1937 in the context of reflex physiology, to differentiate what he was interested in (behavior that affects the environment) from the reflex-related subject matter of the Pavlovians. The term was novel, but its referent was not entirely new. Operant behavior, though defined by Skinner as behavior “controlled by its consequences,” is in practice little different from what had previously been termed “instrumental learning” and what most people would call habit. Any well-trained “operant” is in effect a habit. What was truly new was Skinner's method of automated training with intermittent reinforcement and the subject matter of reinforcement schedules to which it led. Skinner and his colleagues and students discovered in the ensuing decades a completely unsuspected range of powerful and orderly schedule effects that provided new tools for understanding learning processes and new phenomena to challenge theory.

A reinforcement schedule is any procedure that delivers a reinforcer to an organism according to some well-defined rule. The usual reinforcer is food for a hungry rat or pigeon; the usual schedule is one that delivers the reinforcer for a switch closure caused by a peck or lever press. Reinforcement schedules have also been used with human subjects, and the results are broadly similar to the results with animals. However, for ethical and practical reasons, relatively weak reinforcers must be used—and the range of behavioral strategies people can adopt is of course greater than in the case of animals. This review is restricted to work with animals.

Two types of reinforcement schedule have excited the most interest. Most popular are time-based schedules such as fixed and variable interval, in which the reinforcer is delivered after a fixed or variable time period after a time marker (usually the preceding reinforcer). Ratio schedules require a fixed or variable number of responses before a reinforcer is delivered.
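The time-based and ratio rules can be stated precisely as functions of a list of response times. This Python sketch covers the fixed versions only; it assumes, as in the standard free-operant procedure, that on fixed interval the reinforcer goes to the first response made after the interval has elapsed, with the preceding reinforcer as the time marker. The function names are illustrative.

```python
from typing import List


def fixed_interval(response_times: List[float], interval: float) -> List[float]:
    """Reinforcer delivery times on a fixed-interval (FI) schedule.

    The time marker is the preceding reinforcer (session start for the first).
    Assumption: the first response at least `interval` s after the marker is
    reinforced, as in the usual free-operant FI procedure.
    """
    marker = 0.0
    delivered = []
    for t in sorted(response_times):
        if t - marker >= interval:
            delivered.append(t)
            marker = t  # this reinforcer becomes the next time marker
    return delivered


def fixed_ratio(response_times: List[float], ratio: int) -> List[float]:
    """Reinforcer delivery times on a fixed-ratio (FR) schedule: every
    `ratio`-th response is reinforced, however long it takes."""
    return sorted(response_times)[ratio - 1::ratio]


responses = [5, 12, 31, 33, 58, 70]  # made-up response times (s)
print(fixed_interval(responses, 30))  # [31, 70]
print(fixed_ratio(responses, 2))      # [12, 33, 70]
```

The same responses earn different reinforcers under the two rules: on FI the extra responses at 33 s and 58 s earn nothing, while on FR every second response counts.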

Trial-by-trial versions of all these free-operant procedures exist. For example, a version of the fixed-interval schedule specifically adapted to the study of interval timing is the peak-interval procedure, which adds to the fixed interval an intertrial interval (ITI) preceding each trial and a percentage of extra-long “empty” trials in which no food is given.
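A sketch of how a peak-interval session might be laid out as data, under stated assumptions: a fixed ITI before every trial, a fixed probability that a trial is empty, and empty trials lasting 3T s (the typical length the review gives for empty trials). `PeakTrial`, `peak_session`, and their parameters are hypothetical.

```python
import random
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class PeakTrial:
    iti: float                # intertrial interval preceding the trial (s)
    duration: float           # trial length, measured from the end of the ITI (s)
    food_at: Optional[float]  # food time within the trial; None on empty trials


def peak_session(n_trials: int, T: float, p_empty: float, iti: float,
                 seed: Optional[int] = None) -> List[PeakTrial]:
    """Build a hypothetical peak-interval session.

    Filled trials deliver food T s after the trial starts. A fraction p_empty
    of trials are "empty": no food, and 3T s long (treat 3T as an adjustable
    assumption).
    """
    rng = random.Random(seed)
    trials = []
    for _ in range(n_trials):
        if rng.random() < p_empty:
            trials.append(PeakTrial(iti=iti, duration=3 * T, food_at=None))
        else:
            trials.append(PeakTrial(iti=iti, duration=T, food_at=T))
    return trials


# Usage: 20 trials, T = 30 s, about a quarter of them empty, 60-s ITI.
for trial in peak_session(20, T=30.0, p_empty=0.25, iti=60.0, seed=0):
    kind = "empty " if trial.food_at is None else "filled"
    print(kind, trial.duration)
```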

For theoretical reasons, Skinner believed that operant behavior ought to involve a response that can easily be repeated, such as pressing a lever, for rats, or pecking an illuminated disk (key) for pigeons. The rate of such behavior was thought to be important as a measure of response strength (Skinner 1938, 1966, 1986; Killeen & Hall 2001). The current status of this assumption is one of the topics of this review. True or not, the emphasis on response rate has resulted in a dearth of experimental work by operant conditioners on nonrecurrent behavior such as movement in space.

Operant conditioning differs from other kinds of learning research in one important respect. The focus has been almost exclusively on what is called reversible behavior, that is, behavior in which the steady-state pattern under a given schedule is stable, meaning that in a sequence of conditions, XAXBXC…, where each condition is maintained for enough days that the pattern of behavior is locally stable, behavior under schedule X shows a pattern after one or two repetitions of X that is always the same. For example, the first time an animal is exposed to a fixed-interval schedule, after several daily sessions most animals show a “scalloped” pattern of responding (call it pattern A): a pause after each food delivery (also called wait time or latency), followed by responding at an accelerated rate until the next food delivery. However, some animals show negligible wait time and a steady rate (pattern B). If all are now trained on some other procedure (a variable-interval schedule, for example) and then after several sessions are returned to the fixed-interval schedule, almost all the animals will revert to pattern A. Thus, pattern A is the stable pattern. Pattern B, which may persist under unchanging conditions but does not recur after one or more intervening conditions, is sometimes termed metastable (Staddon 1965). The vast majority of published studies in operant conditioning are on behavior that is stable in this sense.

Although the theoretical issue is not a difficult one, there has been some confusion about what the idea of stability (reversibility) in behavior means. It should be obvious that the animal that shows pattern A after the second exposure to procedure X is not the same animal as when it showed pattern A on the first exposure. Its experimental history is different after the second exposure than after the first. If the animal has any kind of memory, therefore, its internal state following the second exposure is likely to be different than after the first exposure, even though the observed behavior is the same. The behavior is reversible; the organism's internal state in general is not. The problems involved in studying nonreversible phenomena in individual organisms have been spelled out elsewhere (e.g., Staddon 2001a, Ch. 1); this review is mainly concerned with the reversible aspects of behavior.

Once the microscope was invented, microorganisms became a new field of investigation. Once automated operant conditioning was invented, reinforcement schedules became an independent subject of inquiry. In addition to being of great interest in their own right, schedules have also been used to study topics defined in more abstract ways such as timing and choice. These two areas constitute the majority of experimental papers in operant conditioning with animal subjects during the past two decades. Great progress has been made in understanding free-operant choice behavior and interval timing. Yet several theories of choice still compete for consensus, and much the same is true of interval timing. In this review we attempt to summarize the current state of knowledge in these two areas, to suggest how common principles may apply in both, and to show how these principles may also apply to reinforcement schedule behavior considered as a topic in its own right.

INTERVAL TIMING

Interval timing is defined in several ways. The simplest is to define it as covariation between a dependent measure such as wait time and an independent measure such as interreinforcement interval (on fixed interval) or trial time-to-reinforcement (on the peak procedure). When interreinforcement interval is doubled, then after a learning period wait time also approximately doubles (proportional timing). This is an example of what is sometimes called a time production procedure: The organism produces an approximation to the to-be-timed interval. There are also explicit time discrimination procedures in which on each trial the subject is exposed to a stimulus and is then required to respond differentially depending on its absolute (Church & Deluty 1977, Stubbs 1968) or even relative (Fetterman et al. 1989) duration. For example, in temporal bisection, the subject (e.g., a rat) experiences either a 10-s or a 2-s stimulus, L or S. After the stimulus goes off, the subject is confronted with two choices. If the stimulus was L, a press on the left lever yields food; if S, a right press gives food; errors produce a brief time-out. Once the animal has learned, stimuli of intermediate duration are presented in lieu of S and L on test trials. The question is, how will the subject distribute its responses? In particular, at what intermediate duration will it be indifferent between the two choices? [Answer: typically in the vicinity of the geometric mean, i.e., $\sqrt{L \cdot S} \approx 4.47$ s for 2 and 10 s.]
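The bisection answer can be checked with a line of arithmetic. Putting the geometric mean next to the arithmetic mean shows that the typical indifference point lies well below the halfway point of the two training durations.

```python
import math

S, L = 2.0, 10.0  # short and long training durations (s), from the example

geometric_mean = math.sqrt(L * S)   # typical indifference point
arithmetic_mean = (L + S) / 2       # the halfway point between the two durations

print(f"geometric: {geometric_mean:.2f} s, arithmetic: {arithmetic_mean:.2f} s")
# geometric: 4.47 s, arithmetic: 6.00 s
```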

Wait time is a latency; hence (it might be objected) it may vary on time-production procedures like fixed interval because of factors other than timing, such as degree of hunger (food deprivation). Using a time-discrimination procedure avoids this problem. It can also be mitigated by using the peak procedure and looking at performance during “empty” trials. “Filled” trials terminate with food reinforcement after (say) T s. “Empty” trials, typically 3T s long, contain no food and end with the onset of the ITI. During empty trials the animal therefore learns to wait, then respond, then stop (more or less) until the end of the trial (Catania 1970). The mean of the distribution of response rates averaged over empty trials (peak time) is then perhaps a better measure of timing than wait time because motivational variables are assumed to affect only the height and spread of the response-rate distribution, not its mean. This assumption is only partially true (Grace & Nevin 2000, MacEwen & Killeen 1991, Plowright et al. 2000).
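Peak time as defined here, the mean of the response-rate distribution averaged over empty trials, is a weighted average of bin times. The Python sketch below assumes responses have already been counted into equal-width time bins; the numbers are made up and chosen to be symmetric around T = 30 s, and the function names are hypothetical.

```python
from typing import List, Sequence


def average_rates(trials: Sequence[Sequence[float]]) -> List[float]:
    """Average the per-bin response rates across empty trials.

    Each trial is a list of response rates in equal-width time bins.
    """
    n = len(trials)
    return [sum(bin_values) / n for bin_values in zip(*trials)]


def peak_time(bin_centers: Sequence[float], rates: Sequence[float]) -> float:
    """Mean of the response-rate distribution: sum(t * rate) / sum(rate)."""
    total = sum(rates)
    if total == 0:
        raise ValueError("no responding recorded")
    return sum(t * r for t, r in zip(bin_centers, rates)) / total


# Made-up data: two empty trials with T = 30 s, 90 s long, 10-s bins.
centers = [5, 15, 25, 35, 45, 55, 65, 75, 85]
empty_trials = [
    [0, 1, 4, 4, 1, 0, 0, 0, 0],
    [0, 2, 6, 6, 2, 0, 0, 0, 0],
]
rates = average_rates(empty_trials)
print(peak_time(centers, rates))  # 30.0
```

Doubling every rate (a change in the height of the distribution) would leave the result unchanged, which is the property the peak procedure relies on.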

There is still some debate about the actual pattern of behavior on the peak procedure in each individual trial. Is it just wait, respond at a constant rate, then wait again? Or is there some residual responding after the “stop” [yes, usually (e.g., Church et al. 1991)]? Is the response rate between start and stop really constant or are there two or more identifiable rates (Cheng & Westwood 1993, Meck et al. 1984)? Nevertheless, the method is still widely used, particularly by researchers in the cognitive/psychophysical tradition. The idea behind this approach is that interval timing is akin to sensory processes such as the perception of sound intensity (loudness) or luminance (brightness). As there is an ear for hearing and an eye for seeing, so (it is assumed) there must be a (real, physiological) clock for timing. Treisman (1963) proposed the idea of an internal pacemaker-driven clock in the context of human psychophysics. Gibbon (1977) further developed the approach and applied it to animal interval-timing experiments.

WEBER'S LAW, PROPORTIONAL TIMING AND TIMESCALE INVARIANCE

The major similarity between acknowledged sensory processes, such as brightness perception, and interval timing is Weber's law. Peak time on the peak procedure is not only proportional to time-to-food (T); its coefficient of variation (standard deviation divided by mean) is also approximately constant, a result similar to Weber's law obeyed by most sensory dimensions. This property has been called scalar timing (Gibbon 1977). Most recently, Gallistel & Gibbon (2000) have proposed a grand principle of timescale invariance, the idea that the frequency distribution of any given temporal measure (the idea is assumed to apply generally, though in fact most experimental tests have used peak time) scales with the to-be-timed interval. Thus, take the normalized peak-time distribution for T = 60 s: if its x-axis is divided by 2, it will match the distribution for T = 30 s. In other words, the frequency distribution for the temporal dependent variable, normalized on both axes, is asserted to be invariant.
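The two ingredients of scalar timing, a constant coefficient of variation and distributions that superimpose once the time axis is divided by T, can be computed directly. The peak times below are invented to satisfy the principle exactly; as the review goes on to argue, real data do so only approximately. The helper names are hypothetical.

```python
import math
import statistics
from typing import List, Sequence


def coefficient_of_variation(values: Sequence[float]) -> float:
    """Standard deviation divided by mean; constant across T under scalar timing."""
    return statistics.stdev(values) / statistics.mean(values)


def normalize(values: Sequence[float], T: float) -> List[float]:
    """Rescale temporal measures by the to-be-timed interval T (the x-axis)."""
    return [v / T for v in values]


# Made-up peak times that obey timescale invariance exactly.
peaks_60 = [50.0, 55.0, 60.0, 65.0, 70.0]   # T = 60 s
peaks_30 = [25.0, 27.5, 30.0, 32.5, 35.0]   # T = 30 s

print(coefficient_of_variation(peaks_60), coefficient_of_variation(peaks_30))
superimpose = all(
    math.isclose(a, b) for a, b in zip(normalize(peaks_60, 60), normalize(peaks_30, 30))
)
print(superimpose)  # True
```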

Timescale invariance is in effect a combination of Weber's law and proportional timing. Like those principles, it is only approximately true. There are three kinds of evidence that limit its generality. The simplest is the steady-state pattern of responding (key-pecking or lever-pressing) observed on fixed-interval reinforcement schedules. This pattern should be the same at all fixed-interval values, but it is not. Gallistel & Gibbon wrote, “When responding on such a schedule, animals pause after each reinforcement and then resume responding after some interval has elapsed. It was generally supposed that the animals' rate of responding accelerated throughout the remainder of the interval leading up to reinforcement. In fact, however, conditioned responding in this paradigm … is a two-state variable (slow, sporadic pecking vs. rapid, steady pecking), with one transition per interreinforcement interval (Schneider 1969)” (p. 293).

This conclusion over-generalizes Schneider's result. Reacting to reports of “break-and-run” fixed-interval performance under some conditions, Schneider sought to characterize this feature more objectively than the simple inspection of cumulative records. He found a way to identify the point of maximum acceleration in the fixed-interval “scallop” by using an iterative technique analogous to attaching an elastic band to the beginning of an interval and the end point of the cumulative record, then pushing a pin, representing the break point, against the middle of the band until the two resulting straight-line segments best fit the cumulative record (there are other ways to achieve the same result that do not fix the end points of the two line-segments). The postreinforcement time (x-coordinate) of the pin then gives the break point for that interval. Schneider showed that the break point is an orderly dependent measure: Break point is roughly 0.67 of interval duration, with standard deviation proportional to the mean (the Weber-law or scalar property).
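Schneider's "elastic band and pin" can be approximated as a least-squares search. This is a sketch of one possible implementation, not Schneider's own procedure: the end points are fixed at the first and last points of the record, candidate break times are limited to the sampled times, and for each candidate the pin height is chosen by least squares (the fitted curve is linear in that height, so the best height has a closed form). `break_point` is a hypothetical name.

```python
from typing import List, Tuple


def break_point(times: List[float], counts: List[float]) -> Tuple[float, float]:
    """Two-segment fit to a cumulative record with fixed end points.

    Returns (break_time, pin_height) minimizing the squared error of two
    straight lines joined at the pin: one from the first point of the
    record, one to the last point.
    """
    t0, y0 = times[0], counts[0]
    tn, yn = times[-1], counts[-1]
    best = None
    for tb in times[1:-1]:
        # For a fixed break time, the fitted value at t is a + b * yb.
        ab = []
        for t in times:
            if t <= tb:
                frac = (t - t0) / (tb - t0)
                ab.append((y0 * (1 - frac), frac))
            else:
                frac = (t - tb) / (tn - tb)
                ab.append((yn * frac, 1 - frac))
        num = sum(b * (y - a) for (a, b), y in zip(ab, counts))
        den = sum(b * b for _, b in ab)   # > 0: b == 1 at t == tb
        yb = num / den                    # least-squares pin height
        sse = sum((y - a - b * yb) ** 2 for (a, b), y in zip(ab, counts))
        if best is None or sse < best[0]:
            best = (sse, tb, yb)
    if best is None:
        raise ValueError("need at least three samples")
    return best[1], best[2]


# Usage: a made-up "break-and-run" record in a 60-s interval.
times = list(range(61))                      # seconds since the last reinforcer
counts = [max(0, t - 40) for t in times]     # pause, then 1 response/s from t = 40
print(break_point(times, counts))  # break at 40 s, pin height about 0
```

Extending the search to two pins would allow the three-line comparison the review says Schneider would have needed to show the process is two-state.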

This finding is by no means the same as the idea that the fixed-interval scallop is “a two-state variable” (Hanson & Killeen 1981). Schneider showed that a two-state model is an adequate approximation; he did not show that it is the best or truest approximation. A three- or four-line approximation (i.e., two or more pins) might well have fit significantly better than the two-line version. To show that the process is two-state, Schneider would have had to show that adding additional segments produced negligibly better fit to the data.

The frequent assertion that the fixed-interval scallop is always an artifact of averaging flies in the face of raw cumulative-record data: the many nonaveraged individual fixed-interval cumulative records in Ferster & Skinner (1957, e.g., pp. 159, 160, 162), which show clear curvature, particularly at longer fixed-interval values (more than about 2 min). The issue for timescale invariance, therefore, is whether the shape, or relative frequency of different-shaped records, is the same at different absolute intervals.

The evidence is that there is more, and more frequent, curvature at longer intervals. Schneider's data show this effect. In Schneider's Figure 3, for example, the time to shift from low to high rate is clearly longer at longer intervals than shorter ones. On fixed-interval schedules, apparently, absolute duration does affect the pattern of responding. (A possible reason for this dependence of the scallop on fixed-interval value is described in Staddon 2001a, p. 317. The basic idea is that greater curvature at longer fixed-interval values follows from two things: a linear increase in response probability across the interval, combined with a nonlinear, negatively accelerated, relation between overall response rate and reinforcement rate.) If there is a reliable difference in the shape, or distribution of shapes, of cumulative records at long and short fixed-interval values, the timescale-invariance principle is violated.

A second dataset that does not agree with timescale invariance is an extensive set of studies on the peak procedure by Zeiler & Powell (1994; see also Hanson & Killeen 1981), who looked explicitly at the effect of interval duration on various measures of interval timing. They conclude, “Quantitative properties of temporal control depended on whether the aspect of behavior considered was initial pause duration, the point of maximum acceleration in responding [break point], the point of maximum deceleration, the point at which responding stopped, or several different statistical derivations of a point of maximum responding … . Existing theory does not explain why Weber's law [the scalar property] so rarely fit the results …” (p. 1; see also Lowe et al. 1979, Wearden 1985 for other exceptions to proportionality between temporal measures of behavior and interval duration). Like Schneider (1969) and Hanson & Killeen (1981), Zeiler & Powell found that the break-point measure was proportional to interval duration, with scalar variance (constant coefficient of variation), and thus consistent with timescale invariance, but no other measure fit the rule.
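Several of the measures Zeiler & Powell compared can be read directly off the response times of a single trial, which is one reason they need not behave alike. The sketch below is an illustration under stated assumptions: response times are in seconds from trial onset, and the mean response time stands in (crudely) for one of the "statistical derivations of a point of maximum responding". The function names are hypothetical; break point and maximum deceleration would require a fitting step such as the ones sketched earlier in this section.

```python
from statistics import mean


def trial_measures(response_times):
    """A few simple temporal measures from one peak-procedure trial.

    response_times: seconds from trial onset at which responses occurred.
    Returns the initial pause (first response), the stop time (last
    response) and the mean response time.
    """
    times = sorted(response_times)
    if not times:
        raise ValueError("no responses on this trial")
    return {
        "initial_pause": times[0],
        "stop": times[-1],
        "mean_time": mean(times),
    }


def relative_measures(response_times, interval):
    """Each measure divided by the interval. Proportional timing predicts that
    these ratios stay the same when the interval changes; each measure can be
    checked separately."""
    return {name: value / interval for name, value in trial_measures(response_times).items()}
```

Averaging these per-trial values at each interval, and checking each measure's mean/interval ratio and coefficient of variation separately, is the kind of comparison that showed only the break point conforming.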

Moreover, the fit of the break-point measure is problematic because it is not a direct measure of behavior but is itself the result of a statistical fitting procedure. It is possible, therefore, that the fit of break point to timescale invariance owes as much to the statistical method used to arrive at it as to the intrinsic properties of temporal control. Even if this caveat turns out to be unfounded, the fact that every other measure studied by Zeiler & Powell failed to conform to timescale invariance surely rules it out as a general principle of interval timing.

The third and most direct test of the timescale-invariance idea is an extensive series of time-discrimination experiments carried out by Dreyfus et al. (1988) and Stubbs et al. (1994). The usual procedure in these experiments was for pigeons to peck a center response key to produce a red light of one duration, followed immediately by a green light of another duration. When the green center-key light went off, two yellow side keys lit up. The animals were reinforced with food for pecking the left side key if the red light had been longer, and the right side key if the green light had been longer.
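The reinforcement rule of this procedure is simple enough to state as code. A minimal sketch; the function names are hypothetical, and because the description does not say what happens when the red and green durations are equal, that case is rejected rather than guessed.

```python
def correct_side_key(red_s: float, green_s: float) -> str:
    """Side key that produces food on one trial of the red/green procedure.

    Left is correct if the red light lasted longer, right if the green light did.
    """
    if red_s > green_s:
        return "left"
    if green_s > red_s:
        return "right"
    raise ValueError("equal red and green durations: no correct key specified")


def is_reinforced(red_s: float, green_s: float, choice: str) -> bool:
    """True if pecking `choice` ("left" or "right") would be reinforced."""
    return choice == correct_side_key(red_s, green_s)
```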

The experimental question is, how does discrimination accuracy depend on the relative and absolute durations of the two stimuli? Timescale invariance predicts that accuracy depends only on the ratio of the red and green durations: For example, accuracy should be the same following the sequence red:10, green:20 as following red:30, green:60, but it is not. Pigeons discriminate between the two short durations better than between the two long ones, even though their ratio is the same. Dreyfus et al. and Stubbs et al. present a wealth of quantitative data of the same sort, all showing that time discrimination depends on absolute as well as relative duration.
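Why timescale invariance predicts ratio-only accuracy can be shown with a simple scalar model. This is an assumed model, not one proposed by Dreyfus et al. or Stubbs et al.: each duration is perceived with independent Gaussian noise whose standard deviation is a fixed fraction (`weber`, set arbitrarily to 0.2) of the duration, and the bird picks the key for whichever perceived duration is larger. Multiplying both durations by the same factor scales the mean difference and the noise equally, so predicted accuracy is unchanged; the pigeon data depart from exactly this prediction.

```python
import math


def scalar_model_accuracy(red_s: float, green_s: float, weber: float = 0.2) -> float:
    """Predicted P(correct) under an assumed scalar-timing model.

    Perceived durations ~ Normal(d, (weber * d)**2), independently. The
    difference of the two perceived durations is Gaussian, so
    P(correct) = Phi(|g - r| / (weber * sqrt(r**2 + g**2))).
    """
    z = abs(green_s - red_s) / (weber * math.hypot(red_s, green_s))
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


short_pair = scalar_model_accuracy(10, 20)
long_pair = scalar_model_accuracy(30, 60)
```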

Timescale invariance is empirically indistinguishable from Weber's law as it applies to time, combined with the idea of proportional timing: The mean of a temporal dependent variable is proportional to the temporal independent variable. But Weber's law and proportional timing are dissociable: It is possible to have proportional timing without conforming to Weber's law and vice versa (cf. Hanson & Killeen 1981, Zeiler & Powell 1994), and in any case both are only approximately true. Timescale invariance therefore does not qualify as a principle in its own right.
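The dissociation can be made concrete by testing the two properties separately on summary statistics. In this sketch the function name, the tolerance `rel_tol` (an arbitrary threshold for "roughly constant"), and both example summaries are hypothetical; they are constructed to show that one property can hold while the other fails, not taken from any study.

```python
def check_properties(summary, rel_tol=0.05):
    """Test proportional timing and Weber's law separately.

    summary maps an interval T (s) to the (mean, sd) of some temporal measure.
    Proportional timing: mean / T roughly constant across T.
    Weber's law: sd / mean (coefficient of variation) roughly constant across T.
    "Roughly constant" means the spread is within rel_tol of the average.
    """
    ratios = [m / T for T, (m, sd) in summary.items()]
    cvs = [sd / m for T, (m, sd) in summary.items()]

    def roughly_constant(values):
        return max(values) - min(values) <= rel_tol * sum(values) / len(values)

    return roughly_constant(ratios), roughly_constant(cvs)


# Hypothetical summaries (not data from any study).
# Mean proportional to T, but SD fixed at 3 s: proportional timing without Weber's law.
proportional_only = {30: (21.0, 3.0), 60: (42.0, 3.0), 120: (84.0, 3.0)}
# Mean = 5 + 0.5 T, SD = 10% of mean: Weber's law without proportional timing.
weber_only = {30: (20.0, 2.0), 60: (35.0, 3.5), 120: (65.0, 6.5)}
```

Timescale invariance requires both checks to pass at once, which is why it adds nothing beyond the two component properties.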

Cognitive and Behavioral Approaches to Timing

The cognitive approach to timing dates from the late 1970s. It emphasizes the psychophysical properties of the timing process and the use of temporal dependent variables as measures of (for example) drug effects and the effects of physiological interventions. It de-emphasizes proximal environmental causes. Yet when timing (then called temporal control; see Zeiler 1977 for an early review) was first discovered by operant conditioners (Pavlov had studied essentially the same phenomenon, delay conditioning, many years earlier), the focus was on the time marker, the stimulus that triggered the temporally correlated behavior. (That is one virtue of the term control: It emphasizes the fact that interval timing behavior is usually not free-running. It must be cued by some aspect of the environment.) On so-called spaced-responding schedules, for example, the response is the time marker: The subject must learn to space its responses more than T s apart to get food. On fixed-interval schedules the time marker is reinforcer delivery; on the peak procedure it is the stimulus events associated with trial onset. This dependence on a time marker is especially obvious on time-production procedures, but on time-discrimination procedures the subject's choice behavior must also be under the control of stimuli associated with the onset and offset of the sample duration.
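On a spaced-responding schedule each response is the time marker for the next, which can be sketched as follows. The function name is hypothetical, and timing the first response from a `start` time (e.g., session onset) is an assumption, since the text does not say how the first response is handled.

```python
def spaced_responding_food(response_times, T, start=0.0):
    """Responses that earn food on a spaced-responding schedule.

    A response is reinforced only if more than T s have passed since the
    previous response. Assumption: the first response is timed from `start`.
    """
    reinforced = []
    last = start
    for r in sorted(response_times):
        if r - last > T:
            reinforced.append(r)
        last = r  # every response, reinforced or not, restarts the interval
    return reinforced
```

For example, with `T = 10` and responses at 5, 12, 30, 35, and 50 s, only the responses at 30 and 50 s follow a gap of more than 10 s and so earn food.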

Not all stimuli are equally effective as time markers. For example, an early study by Staddon & Innis (1966a; see also 1969) showed that if, on alternate fixed intervals, 50% of reinforcers (F) are omitted and replaced by a neutral stimulus (N) of the same duration, wait time following N is much shorter than after F (the reinforcement-omission effect). Moreover, this difference persists indefinitely. Despite the fact that F and N have the same temporal relationship to the reinforcer, F is much more effective as a time marker than N. No exactly comparable experiment has been done using the peak procedure, partly because the time marker there involves ITI offset/trial onset rather than reinforcer delivery, so there is no simple manipulation equivalent to reinforcement omission.

These effects do not depend on the type of behavior controlled by the time marker. On fixed-interval schedules the time marker is in effect inhibitory: Responding is suppressed during the wait time and then occurs at an accelerating rate. Other experiments (Staddon 1970, 1972), however, showed that given the appropriate schedule, the time marker can control a burst of responding (rather than a wait) of a duration proportional to the schedule parameters (temporal go–no-go schedules). Later experiments have shown that the place of responding can be controlled by time since trial onset in the so-called tri-peak procedure (Matell & Meck 1999).

A theoretical review (Staddon 1974) concluded, “Temporal control by a given time marker depends on the properties of recall and attention, that is, on the same variables that affect attention to compound stimuli and recall in memory experiments such as delayed matching-to-sample.” By far the most important variable seems to be “the value of the time-marker stimulus”: “Stimuli of high value … are more salient …” (p. 389), although the full range of properties that determine time-marker effectiveness is yet to be explored.

Reinforcement-omission experiments are transfer tests, that is, tests to identify the effective stimulus. They pinpoint the stimulus property controlling interval timing (the effective time marker) by selectively eliminating candidate properties. For example, in a definitive experiment, Kello (1972) showed that on fixed interval the wait time is longest following standard reinforcer delivery (food hopper activated with food, hopper light on, house light off, etc.). Omission of any of those elements caused the wait time to decrease, a result consistent with the hypothesis that reinforcer delivery acquires inhibitory temporal control over the wait time. The only thing that makes this situation different from the usual generalization experiment is that the effects of reinforcement omission are relatively permanent. In the usual generalization experiment, delivery of the reinforcer according to the same schedule in the presence of both the training stimulus and the test stimuli would soon lead all to be responded to in the same way. Not so with temporal control: As we just saw, even though N and F events have the same temporal relationship to the next food delivery, animals never learn to respond similarly after both. The only exception is when the fixed interval is relatively short, on the order of 20 s or less (Starr & Staddon 1974). Under these conditions pigeons are able to use a brief neutral stimulus as a time marker on fixed interval.

The Gap Experiment

The closest equivalent to fixed-interval reinforcement omission using the peak procedure is the so-called gap experiment (Roberts 1981). In the standard gap paradigm the sequence of stimuli in a training trial (no gap stimulus) consists of three successive stimuli: the intertrial interval stimulus (ITI), the fixed-duration trial stimulus (S), and food reinforcement (F), which ends each training trial. The sequence is thus ITI, S, F, ITI. Training trials are typically interspersed with empty probe trials that last longer than reinforced trials but end with an ITI only and no reinforcement. The stimulus sequence on such trials is ITI, S, ITI, but the S is two or three times longer than on training trials. After performance has stabilized, gap trials are introduced into some or all of the probe trials. On gap trials the ITI stimulus reappears for a while in the middle of the trial stimulus. The sequence on gap trials is therefore ITI, S, ITI, S, ITI. Gap trials do not end in reinforcement.
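The three trial types can be written down as stimulus sequences. This is a minimal sketch with hypothetical names and durations: each trial is listed from its own ITI up to (but not including) the next trial's ITI, the probe factor of 2.5 is an arbitrary value within the "two or three times" range given above, and the durations of the S segments around the gap are left as explicit parameters because the text does not fix them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    stimulus: str    # "ITI", "S" (trial stimulus) or "F" (food)
    duration: float  # seconds


def training_trial(iti, s, food):
    """ITI, S, F; the following trial's ITI comes next."""
    return [Segment("ITI", iti), Segment("S", s), Segment("F", food)]


def probe_trial(iti, s, factor=2.5):
    """Empty probe: S lasts `factor` times the training S and ends without food."""
    return [Segment("ITI", iti), Segment("S", factor * s)]


def gap_trial(iti, before_gap, gap, after_gap):
    """Probe trial in which the ITI stimulus reappears mid-trial for `gap` s."""
    return [Segment("ITI", iti), Segment("S", before_gap),
            Segment("ITI", gap), Segment("S", after_gap)]


def stimulus_order(trial):
    return [seg.stimulus for seg in trial]


def reinforced(trial):
    return any(seg.stimulus == "F" for seg in trial)
```

Written this way, the gap trial visibly contains a second ITI-to-S transition, the same event that marks trial onset, which is the basis for treating the gap experiment as a generalization test.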

What is the effective time marker (i.e., the stimulus that exerts temporal control) in such an experiment? ITI offset/trial onset is the best temporal predictor of reinforcement: Its time to food is shorter and less variable than that of any other experimental event. Most but not all ITIs follow reinforcement, and the ITI itself is often variable in duration and relatively long, so reinforcer delivery is a poor temporal predictor. The time marker therefore has something to do with the transition between ITI and trial onset, that is, between ITI and S. Gap trials also involve presentation of the ITI stimulus, albeit with a different duration and within-trial location than the usual ITI, but the similarities to a regular trial are obvious. The gap experiment is therefore a sort of generalization (of temporal control) experiment. Buhusi & Meck (2000) presented gap stimuli more or less similar to the ITI stimulus during probe trials and found results resembling generalization decrement, in agreement with this analysis.

However, the gap procedure was not originally thought of as a generalization test, nor is it particularly well designed for that purpose. The gap procedure arose directly from the cognitive idea that interval timing behavior is driven by an internal clock (Church 1978). From this point of view it is perfectly natural to inquire about the conditions under which the clock can be started or stopped. If the to-be-timed interval is interrupted by a gap, will the clock restart when the trial stimulus returns (reset)? Will it continue running during the gap and afterwards (run)? Or will it stop and then restart (stop)?

“Reset” corresponds to the maximum rightward shift (from trial onset) of the response-rate peak, from its usual position $t$ s after trial onset to $t + G_E$, where $G_E$ is the offset time (end) of the gap stimulus. Conversely, no effect (clock keeps running) leaves the peak unchanged at $t$, and “stop and restart” is an intermediate result, a peak shift to $G_E - G_B + t$, where $G_B$ is the time of onset (beginning) of the gap stimulus.
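The three clock predictions differ only in how much of the elapsed time is discarded, which a short function makes explicit. The function and rule names are hypothetical labels for the three cases; all times are in seconds from trial onset, with $t$ the usual peak time and $G_B$, $G_E$ the gap onset and offset.

```python
def predicted_peak(t, gap_begin, gap_end, rule):
    """Peak time (s from trial onset) predicted by each clock rule.

    t: usual peak time; gap_begin (G_B) and gap_end (G_E): gap onset and
    offset, measured from trial onset.
    """
    if rule == "run":    # clock ignores the gap: peak stays at t
        return t
    if rule == "stop":   # clock pauses for the gap: peak at G_E - G_B + t
        return t + (gap_end - gap_begin)
    if rule == "reset":  # clock restarts when the gap ends: peak at t + G_E
        return t + gap_end
    raise ValueError(f"unknown rule: {rule!r}")
```

With $t = 20$, $G_B = 10$, and $G_E = 15$, the predicted peaks are 20 s (run), 25 s (stop), and 35 s (reset).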

Both gap duration and placement within a trial have been varied. The results obtained so far are rather complex (cf. Buhusi & Meck 2000, Cabeza de Vaca et al. 1994, Matell & Meck 1999). In general, the longer the gap and the later it appears in the trial, the greater the rightward peak shift. All these effects can be interpreted in clock terms, but the clock view provides no real explanation for them, because it does not specify which one will occur under a given set of conditions. The results of gap experiments can be understood in a qualitative way in terms of the similarity of the gap presentation to events associated with trial onset; the more similar, the closer the effect will be to reset, i.e., the onset of a new trial. Another resemblance between gap results and the results of reinforcement-omission experiments is that the effects of the gap are also permanent: Behavior on later trials usually does not differ from behavior on the first few (Roberts 1981). These effects have been successfully simulated quantitatively by a neural network timing model (Hopson 1999, 2002) that includes the assumption that the effects of time-marker presentation decay with time (Cabeza de Vaca et al. 1994).